
Web scraper click button

1/11/2024

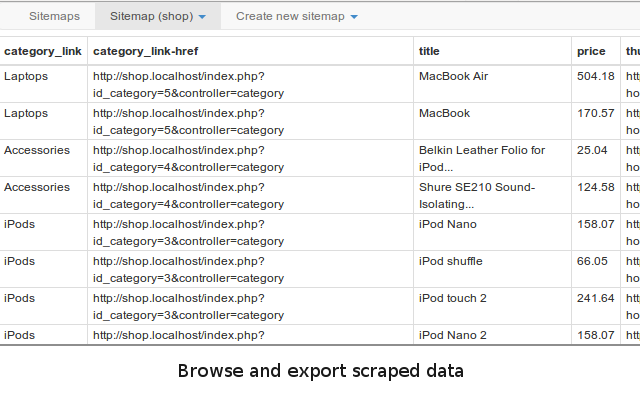

Next-button pagination is the most common type of pagination on websites: the page has a button (or hyperlink) labelled "Next" that you click to go to the next page. For example, the web page in the screenshot has a Next button right at the bottom of the page.

Go to the web page you want to crawl and find the unique CSS selector of the Next button, either with the Agenty Chrome extension or manually, by inspecting the element (or viewing the page source) in Chrome Developer Tools. Using the extension on this page, I found that `a.next` is the unique selector of the Next button to click. The web scraper then keeps paginating until it reaches the maximum page limit you set, or until the Next button becomes invisible or disabled on the web page.

To get started with infinite-scrolling pages, note that scraping them works a bit differently from the Next/Previous pagination described above, where we simply clicked the Next button to load the next page and kept scraping until the button was gone. A typical infinite-scrolling page sends an HTTP GET or POST request to the server in the background to fetch the data. A response-handler function then parses the response and appends the new items to the list or search-results container on the page, so more and more items appear as the user scrolls down.
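Both pagination styles can be approximated in a real browser with Selenium. The sketch below is an illustration under stated assumptions, not how the Agenty agent works internally: it assumes a Selenium 4 WebDriver named `driver`, the `a.next` selector from the example above, and a hypothetical `scrape_page(driver)` callback that returns the items on the current page. The click loop stops when the Next button is missing, hidden, or disabled, or when `max_pages` is reached; the scroll loop stops once scrolling to the bottom no longer makes the page taller.

```python
import time


def paginate_by_click(driver, scrape_page, next_selector="a.next", max_pages=100, delay=1.0):
    """Scrape each page, then click Next until it is missing, hidden, or disabled."""
    results = []
    for _ in range(max_pages):
        results.extend(scrape_page(driver))  # scrape_page is a hypothetical callback
        # "css selector" is the string value of Selenium's By.CSS_SELECTOR.
        buttons = driver.find_elements("css selector", next_selector)
        if not buttons:
            break  # no Next button: this was the last page
        button = buttons[0]
        # Assumption: sites often mark a disabled link with a "disabled" CSS class.
        classes = button.get_attribute("class") or ""
        if not button.is_displayed() or not button.is_enabled() or "disabled" in classes.split():
            break  # hidden or disabled Next button: this was the last page
        button.click()
        time.sleep(delay)  # crude fixed wait for the next page to load
    return results


def scroll_until_no_new_content(driver, max_scrolls=50, delay=1.0):
    """Scroll to the bottom repeatedly until the document height stops growing."""
    last_height = driver.execute_script("return document.body.scrollHeight")
    for _ in range(max_scrolls):
        driver.execute_script("window.scrollTo(0, document.body.scrollHeight);")
        time.sleep(delay)  # give the background request time to append new items
        new_height = driver.execute_script("return document.body.scrollHeight")
        if new_height == last_height:
            break  # nothing new was appended, so we reached the end
        last_height = new_height
    return last_height
```

The fixed `time.sleep` keeps the sketch short; in practice an explicit wait (`WebDriverWait` with an expected condition) is usually more robust.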
The pagination settings in the scraping agent are:

- Pagination type: Click, Infinite-Scroll, or Load-More, the type of pagination you want your scraping agent to run.
- Next page selector: the unique CSS selector of the Next button. The agent clicks that button to paginate until it is hidden or disabled.
- Script: an advanced JavaScript expression for developers who want to write their own pagination code to handle complex sites.
- Page limits: the maximum number of pages to paginate. It can be anything, such as 100 or 1000, but the web scraper exits pagination early if the Next button is not found or disabled, or the end of the page is reached.

Clicking, Typing, Hovering and Scrolling with Selenium

So you've tried to scrape some data from the latest website, only to realize your current toolset for parsing HTML pages no longer suffices. With the rise of AJAX, many of today's websites (including the likes of Netflix and Airbnb) use React.js or similar frameworks to build interactive interfaces where the DOM itself is updated fluidly based on user interactions. This contrasts with the older approach of navigating to a new URL and making an additional HTTP request. In these scenarios, older tools such as BeautifulSoup may not be enough.

If you aren't familiar with Selenium, it allows you to manipulate and control a web browser. Originally designed to help developers create and run tests of their websites, it is also incredibly useful for scraping those same sites. To start, you'll need to download the appropriate Selenium WebDriver for the web browser of your choice. Then, after the standard `pip install selenium`, you can turn to your favorite Python environment and start a web scraping session, being sure to specify the file path of the driver.

Scrolling

The function below mimics human scrolling: it scrolls toward an element in small, randomly sized steps until the element is within 70 pixels of the center of the current viewport.

```python
import numpy as np


def scroll_to_element(driver, element):  # hypothetical name; the original was lost
    """Mimic human scrolling, putting the element within 70 pixels of the viewport center."""
    window_height = driver.execute_script("return window.innerHeight")
    # Current vertical scroll offset of the page (reconstructed JS expression).
    start_dom_top = driver.execute_script("return document.documentElement.scrollTop")
    element_location = element.location["y"]  # element's y-offset from the top of the page
    desired_dom_top = element_location - window_height / 2  # Center it!
    to_go = desired_dom_top - start_dom_top
    cur_dom_top = start_dom_top
    while np.abs(cur_dom_top - desired_dom_top) > 70:
        # Random 2-69 px step toward the target; steps under 70 px cannot jump past the target window.
        scroll = np.random.uniform(2, 69) * np.sign(to_go)
        driver.execute_script("window.scrollBy(0, {})".format(scroll))
        new_dom_top = driver.execute_script("return document.documentElement.scrollTop")
        if new_dom_top == cur_dom_top:
            break  # the page cannot scroll any further in this direction
        cur_dom_top = new_dom_top
```

Conclusion

Web scraping is a valuable skill set for data acquisition, allowing you to generate new datasets from information across the web. While many tools exist, Selenium is indispensable, allowing you to launch and control a web browser to mimic human interactions. Such interactions include moving the mouse (virtually), scrolling, clicking on elements, and typing text into fields. With this, you'll be able to scrape more diverse and complex websites across the web.
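The clicking and typing interactions mentioned above can be sketched in a few lines. This is a minimal illustration, assuming a Selenium 4 WebDriver `driver` and CSS selectors you supply; the helper names `click_element` and `type_like_human` and the random per-keystroke pauses are hypothetical choices, not part of Selenium's API.

```python
import random
import time


def click_element(driver, css_selector):
    """Find an element by CSS selector and click it."""
    # "css selector" is the string value of Selenium's By.CSS_SELECTOR.
    element = driver.find_element("css selector", css_selector)
    element.click()
    return element


def type_like_human(driver, css_selector, text, min_pause=0.05, max_pause=0.25):
    """Type text into a field one character at a time, pausing randomly between keys."""
    field = driver.find_element("css selector", css_selector)
    field.clear()  # remove any pre-filled value first
    for char in text:
        field.send_keys(char)
        time.sleep(random.uniform(min_pause, max_pause))
    return field
```

For hovering, Selenium provides `ActionChains` in `selenium.webdriver.common.action_chains`; `ActionChains(driver).move_to_element(element).perform()` moves the virtual mouse over an element.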
